
Proofreading software gets teams most of the way there. But “most of the way” isn’t good enough when a missed deviation on a package insert can trigger a recall. Here are the four most common gaps — and why they matter for regulated workflows.

Most proofreading tools are built around a shared set of assumptions: that documents are text-based, share the same format, and exist in isolation. For general business writing, those assumptions hold. For regulated industries managing packaging, labeling, and regulatory submissions, they break down quickly.

As a result, teams rely on tools that were never designed for their actual workload, and they discover the limitations only when an error makes it through review.

1 – Treating documents as text — and ignoring everything else

Standard proofreading tools scan for text differences. That works well when the document is a straightforward written file. But regulated packaging and labeling aren’t just text — they’re complex compositions of text, images, pictograms, barcodes, tables, and structured layouts where every element carries meaning.

A generic tool might confirm that the product name is spelled correctly while completely missing that a warning pictogram was removed, a reading order changed, or a 1D barcode won’t scan. These aren’t cosmetic issues. For a medical device label or pharma package insert, they’re compliance failures.

Real risk: An unverified barcode or altered pictogram on packaging can reach print — and regulators — undetected.
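To make the barcode point concrete, here is a minimal sketch of one check a text-only tool never performs: validating the check digit of a GTIN (the number behind EAN and UPC barcodes) using the standard GS1 modulo-10 scheme. The function names are illustrative, and the sketch assumes the digits have already been decoded from the artwork. It says nothing about print quality or whether a scanner can actually read the symbol.

```python
def gtin_check_digit(data_digits: str) -> int:
    """Compute the GS1 check digit for a GTIN's data digits (everything except the last digit)."""
    if not (data_digits.isascii() and data_digits.isdigit()):
        raise ValueError("GTIN data must contain ASCII digits only")
    total = 0
    # Walk from the rightmost data digit; weights alternate 3, 1, 3, 1, ...
    for position, char in enumerate(reversed(data_digits)):
        weight = 3 if position % 2 == 0 else 1
        total += int(char) * weight
    return (10 - total % 10) % 10


def is_valid_gtin(code: str) -> bool:
    """Return True if code is a GTIN-8/12/13/14 whose last digit matches the computed check digit."""
    if not (code.isascii() and code.isdigit()) or len(code) not in (8, 12, 13, 14):
        return False
    return gtin_check_digit(code[:-1]) == int(code[-1])
```

A check like this catches a mistyped or truncated number on a label, which a spelling-focused comparison would pass without comment.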

2 – Generating no audit trail

Compliance is not just about catching errors — it’s about being able to prove you caught them. Regulatory bodies expect documentation: who reviewed what, when, what deviations were found, and how they were resolved. That record is the audit trail.

Generic tools like Word’s Compare feature or basic diff utilities produce on-screen results that disappear when the session closes. There is no automated report, no version history tied to the comparison, and no exportable record of the review. Teams using these tools are often rebuilding documentation manually after the fact — which introduces its own risk of error and takes time that adds up fast at scale.

Real risk: Without a complete audit trail, a review that happened may be impossible to demonstrate during an inspection or submission audit.
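As a rough illustration of what "who reviewed what, when, what deviations were found, and how they were resolved" could look like as an exportable record, here is a minimal sketch. Every name in it (`ReviewRecord`, `Deviation`, the field names, the sample reviewer) is hypothetical and not taken from any particular product; a real system would also need access control, signatures, and tamper-evident storage.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def sha256_of(content: bytes) -> str:
    """Fingerprint a file's bytes so the record pins the exact versions compared."""
    return hashlib.sha256(content).hexdigest()


@dataclass
class Deviation:
    location: str              # where the deviation was found, e.g. "panel 2"
    description: str           # what differs between the two files
    resolution: str = "open"   # how it was resolved; "open" until reviewed


@dataclass
class ReviewRecord:
    reviewer: str
    source_sha256: str
    target_sha256: str
    # Timestamp in UTC, captured when the record is created
    reviewed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )
    deviations: list[Deviation] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize the whole record, including nested deviations, for export."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Hypothetical usage: the byte strings stand in for real file contents.
record = ReviewRecord(
    reviewer="j.doe",
    source_sha256=sha256_of(b"approved brief v3"),
    target_sha256=sha256_of(b"artwork proof v1"),
)
record.deviations.append(Deviation("panel 2", "warning pictogram missing"))
print(record.to_json())
```

The point of hashing both files is that the record stays tied to specific versions, so a later inspection can confirm which documents the review actually covered.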

3 – Ignoring the workflow around the comparison

A document comparison is rarely a single event. In regulated industries, content moves through multiple stages — from brief to draft, draft to translation, translation to artwork, artwork to print proof — with different teams, contractors, and systems at each step. Every handoff is a point where errors can enter.

Generic tools are standalone: you open two files, compare them, close the window. They have no concept of project templates, bulk processing, system integration, or multi-stage review workflows. Teams using them are managing the entire coordination layer manually — spreadsheets, email threads, and memory — which scales poorly and introduces the human errors the tool was meant to prevent.

Real risk: An error introduced at a translation handoff or during artwork revision may not be caught because the workflow has no automated checkpoint at that stage.

4 – Weak multilingual support

Global products require global labeling. A single product may need packaging verified in 20 or more languages, many of which the review team doesn’t speak. Generic proofreading tools have no way to verify a translated text against its source: they can compare two files character by character, but they can’t tell whether the meaning, structure, or regulatory language has changed.

This leaves teams either skipping language verification entirely, relying on bilingual colleagues who may not be available, or contracting external review for every language market. All of these approaches are slow, inconsistent, and hard to document in a way that satisfies regulators.

Real risk: A label in a regional language that includes an incorrect dosage instruction or missing contraindication may not be flagged before it reaches market.
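One language-independent check that does not require a bilingual reviewer is confirming that numeric values, such as dosages, survive translation. The sketch below is an assumption-laden illustration, not a description of how any specific tool works: the function names are made up, it treats both `.` and `,` as decimal separators, and it would misread thousands separators such as `1,000`. It also cannot judge meaning; it only flags numbers that appear on one side and not the other.

```python
import re
from collections import Counter
from decimal import Decimal

# A run of digits, optionally followed by a decimal part written with "." or ","
NUMBER = re.compile(r"\d+(?:[.,]\d+)?")


def numeric_values(text: str) -> Counter:
    """Count every number in the text, normalizing decimal commas to points."""
    return Counter(Decimal(m.replace(",", ".")) for m in NUMBER.findall(text))


def numeric_mismatches(source: str, translation: str):
    """Return (numbers missing from the translation, numbers only in the translation)."""
    src, tgt = numeric_values(source), numeric_values(translation)
    return src - tgt, tgt - src


source = "Take 2.5 mg twice daily. Do not exceed 10 mg."
translation = "25 mg zweimal täglich einnehmen. 10 mg nicht überschreiten."
missing, extra = numeric_mismatches(source, translation)
print("missing:", dict(missing), "unexpected:", dict(extra))
```

In this example the dropped decimal separator turns 2.5 mg into 25 mg, which the check surfaces even for a reviewer who reads no German.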

Why these gaps compound each other

Individually, each of these limitations is a known trade-off teams learn to work around. Taken together, they create a verification process that is brittle at exactly the points where regulated industries can least afford it: high-volume periods, complex multilingual projects, and submissions where documentation must be airtight.

The pattern that emerges is predictable: teams start with generic tools, layer on manual compensations, and then — often after a near-miss or an actual error — look for a solution built for their actual workload.

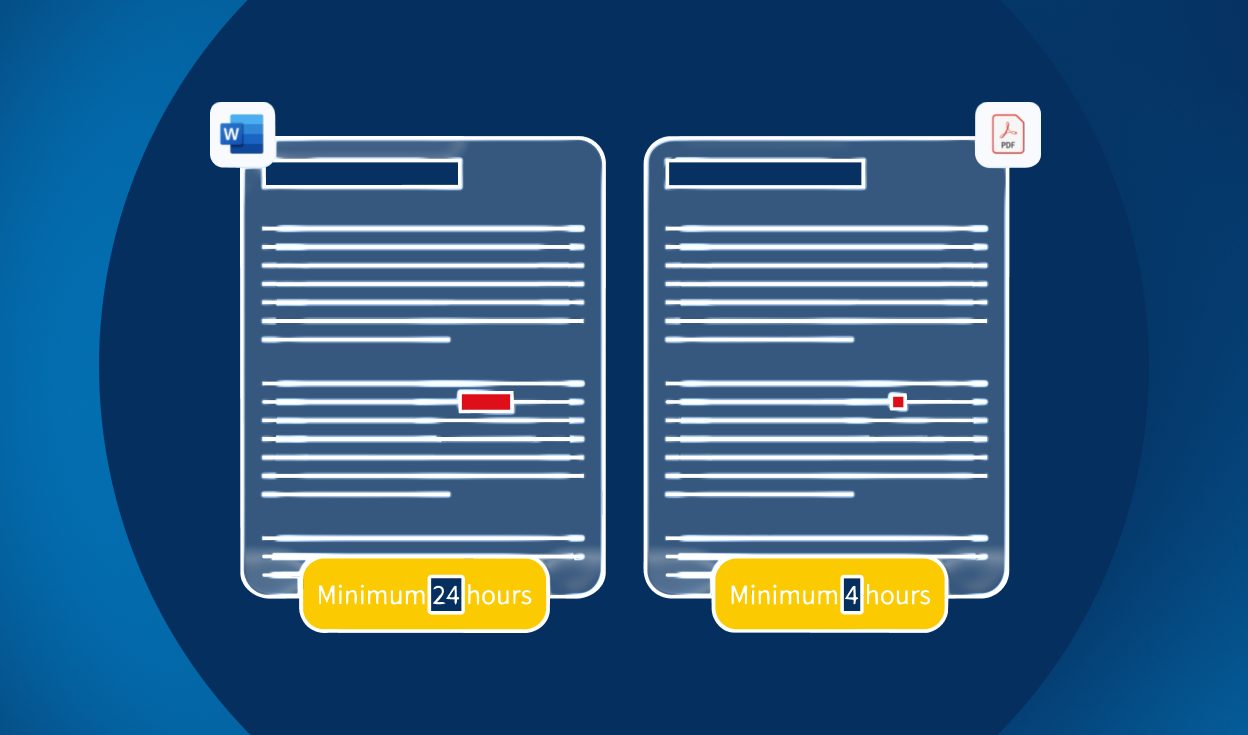

Common pattern: Many teams begin with Word or Adobe comparison features, then outgrow them when they need automated annotations, cross-format support, and exportable reports. The switch rarely happens before at least one painful manual workaround becomes unsustainable.

What compliance-ready verification actually looks like

A verification tool built for regulated teams should:

- Compare files across formats — Word to PDF, brief to artwork, source to translation

- Detect deviations in text, images, formatting, barcodes, and reading order

- Generate a complete, exportable audit trail with version control

- Support any language without requiring a bilingual reviewer

- Integrate into existing artwork management, RIM, DMS, or PLM systems

- Handle bulk projects with configurable templates and automated annotation

- Minimize false positives so reviewers focus on real deviations

These aren’t advanced features — they’re the baseline for a tool that’s actually fit for purpose in a regulated environment. The gap between what generic tools provide and what this list describes is exactly the gap that leads to errors, delays, and compliance risk.
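To illustrate the false-positive item in the list above: when the same content is compared across formats, many reported differences are artifacts of extraction, such as non-breaking spaces, ligatures, or changed line wrapping. A minimal sketch of one way to suppress that noise before diffing, using only the Python standard library, is shown here. The function names are illustrative, and the normalization choices (Unicode NFKC plus whitespace collapsing) are an assumption about what counts as noise; a regulated workflow would need to decide and document that explicitly.

```python
import difflib
import re
import unicodedata


def normalize(text: str) -> str:
    """Fold compatibility characters (ligatures, non-breaking spaces) and collapse whitespace."""
    text = unicodedata.normalize("NFKC", text)
    return re.sub(r"\s+", " ", text).strip()


def real_differences(a: str, b: str):
    """Return word-level differences that remain after normalization."""
    a_words = normalize(a).split(" ")
    b_words = normalize(b).split(" ")
    matcher = difflib.SequenceMatcher(None, a_words, b_words, autojunk=False)
    return [
        (tag, " ".join(a_words[i1:i2]), " ".join(b_words[j1:j2]))
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


# Text as it might be extracted from a PDF versus the Word source
pdf_text = "10\u00a0mg \ufb01lm-coated\ntablets"
word_text = "10 mg  film-coated tablets"
print(real_differences(pdf_text, word_text))                      # extraction noise only
print(real_differences(pdf_text, "20 mg film-coated tablets"))    # a genuine change
```

The first comparison differs only in encoding and spacing, so nothing is reported; the second differs in the strength, so the reviewer sees exactly one deviation.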

The takeaway

Proofreading software isn’t broken — it’s just solving a different problem. The question regulated teams need to ask isn’t “does this tool find errors?” but “does this tool find every error that matters, with the documentation to prove it?” Generic tools rarely answer yes to both. Specialized verification software exists precisely because that distinction matters.